An Introduction to Systematic Reviews by David Gough Chapter 5

- Commentary

- Open Access

Clarifying differences between review designs and methods

Systematic Reviews volume 1, Article number: 28 (2012)

Abstract

This paper argues that the current proliferation of types of systematic reviews creates challenges for the terminology for describing such reviews. Terminology is necessary for planning, describing, appraising, and using reviews, for building infrastructure to enable the conduct and use of reviews, and for further developing review methodology. There is insufficient consensus on terminology for a typology of reviews to be produced, and any such attempt is likely to be limited by the overlapping nature of the dimensions along which reviews vary. It is therefore proposed that the most useful strategy for the field is to develop terminology for the main dimensions of variation. Three such main dimensions are proposed: (1) aims and approaches (including what the review is aiming to achieve, the theoretical and ideological assumptions, and the use of theory and logics of aggregation and configuration in synthesis); (2) structure and components (including the number and type of mapping and synthesis components and how they relate); and (3) breadth and depth and the extent of 'work done' in addressing a research issue (including the breadth of review questions, the detail with which they are addressed, and the amount the review progresses a research agenda). This then provides an overarching strategy to encompass more detailed descriptions of methodology and may lead in time to a more overarching system of terminology for systematic reviews.

Background

Research studies vary in many ways including the types of research questions they are asking, the reasons these questions are being asked, the theoretical and ideological perspectives underlying these questions, and in the research methods that they employ. Systematic reviews are a form of research; they are a way of bringing together what is known from the research literature using explicit and accountable methods, which have their own underlying theoretical and ideological perspectives [1]. Systematic methods of review have been successfully developed particularly for questions concerning the impact of interventions; these synthesize the findings of studies which use experimental controlled designs. Yet the logic of systematic methods for reviewing the literature can be applied to all areas of research; therefore there can be as much variation in systematic reviews as is found in primary research [2, 3]. This paper discusses some of the important conceptual and practical differences between different types of systematic review. It does not aim to provide an overall taxonomy of all types of reviews; the rate of development of new approaches to reviewing is too fast and the overlap of approaches too great for that to be helpful. Instead, the paper argues that, for the present at least, it is more useful to identify the key dimensions on which reviews differ and to examine the multitude of different combinations of those dimensions. The paper also does not aim to describe all of the myriad actual and potential differences between reviews; this would be a task too large even for a book, let alone a paper. The focus instead is on three major types of dimensions of difference. The first dimension is the aims and approaches of reviews, particularly in terms of their methodologies (their ontological and epistemological foundations and methods of synthesis). The second dimension is the structure and components of reviews. The third dimension is the breadth, depth, and extent of the work done by a review in engaging with a research issue. Once these three aspects of a review are clear, consideration can be given to more specific methodological issues such as methods of searching, identifying, coding, appraising, and synthesizing evidence. The aim of this paper is to clarify some of the major conceptual distinctions between reviews to aid the selection, evaluation, and development of methods for reviewing.

Clarifying the nature of variation in reviews

As forms of research, systematic reviews are undertaken according to explicit methods. The term 'systematic' distinguishes them from reviews undertaken without clear and accountable methods.

The history of systematic reviews is relatively recent [4, 5] and, despite early work on meta-ethnography [6], the field has been dominated by the development and application of statistical meta-analysis of controlled trials to synthesize the evidence on the effectiveness of health and social interventions. Over the past ten years, other methods for reviewing have been developed. Some of these methods aim to extend effectiveness reviews with information from qualitative studies [7]. The qualitative information may be used to inform decisions made in the statistical synthesis or be part of a mixed methods synthesis (discussed later). Other approaches have been developed from a perspective which, instead of the statistical aggregation of data from controlled trials, emphasizes the central role that theory can play in synthesizing existing research [8, 9], addresses the complexity of interventions [10], and stresses the importance of understanding research within its social and paradigmatic context [11]. The growth in methods has not been accompanied by a clear typology of reviews. The result is a complex web of terminology [2, 12].

The lack of clarity about the range of methods of review has consequences which can limit their development and subsequent use. Knowledge or consensus about the details of specific methods may be lacking, creating the danger of over-generalization or inappropriate application of the terminology being used. Also, the branding of different types of review can lead to over-generalization and simplification, with assumptions being made about differences between reviews that only apply to particular stages of a review or that are matters of degree rather than absolute differences. For example, concepts of quality assurance can differ depending upon the nature of the research question being asked. Similarly, infrastructure systems developed to enable the better reporting and critical appraisal of reviews, such as PRISMA [13], and for registration of reviews, such as PROSPERO [14], currently apply predominantly to a subset of reviews, the defining criteria of which may not be fully clear.

A further problem is that systematic reviews have attracted criticism on the assumption that systematic reviewing is applicable only to empirical quantitative research [15]. In this way, polarized debates about the utility and relevance of different research paradigms may further complicate terminological issues and conceptual understandings about how reviews actually differ from one another. All of these difficulties are heightened because review methods are undergoing a period of rapid development and so the methods being described are often being updated and refined.

Knowledge about the nature and strengths of different forms of review is necessary for: appropriate choice of review methods by those undertaking reviews; consideration of the importance of different issues of quality and relevance for each stage of a review; appropriate and accurate reporting and accountability of such review methods; interpretation of reviews; commissioning of reviews; development of procedures for assessing and undertaking reviews; and development of new methods.

Clarifying the nature of the similarities and differences between reviews is a first step to avoiding these potential limitations. A typology of review methods might be a solution. There are many diverse approaches to reviews that can be easily distinguished, such as statistical meta-analysis and meta-ethnography. A more detailed examination, however, reveals that the types of review currently described often have commonalities that vary across types of review and at different stages of a review. Three of these dimensions are described here. Exploring these dimensions also reveals how reviews differ in degree along these overlapping dimensions rather than falling into clear categories.

Review aims and approaches

Primary research and research reviews vary in their ontological, epistemological, ideological, and theoretical stance, their research paradigm, and the issues that they aim to address. In reviews, this variation occurs in both the method of review and the type of primary research that they consider. As reviews will include primary studies that address the focus of the review question, it is not surprising that review methods also tend to reflect many of the approaches, assumptions, and methodological challenges of the primary research that they include.

One indication of the aim and approach of a study is the research question which the study aims to answer. Questions commonly addressed by systematic reviews include: what is the effect of this intervention (addressed by, for example, the statistical meta-analysis of experimental trials); what is the accuracy of this diagnostic tool (addressed by, for example, meta-analysis of evaluations of diagnostic tests); what is the cost of this intervention (addressed by, for example, a synthesis of cost-benefit analyses); what is the meaning or process of a phenomenon (addressed by, for example, conceptual synthesis such as meta-ethnography or a critical interpretative synthesis of ethnographic studies); what is the effect of this complex intervention (addressed by, for example, multi-component mixed methods reviews); what is the effect of this approach to social policy in this context (addressed by, for example, realist synthesis of evidence of efficacy and relevance across different policy areas); and what are the attributes of this intervention or activity (addressed by, for example, framework synthesis framed by dimensions explicitly linked to particular perspectives).

Although different questions drive the review process and suggest different methods for reviewing (and methods of studies included), there is considerable overlap in the review methods that people may select to answer these questions; thus the review question alone does not provide a complete basis for generating a typology of review methods.

Role of theory

There is no agreed typology of research questions in the health and social sciences. In the absence of such a typology, one way to distinguish research is in the extent that it is concerned with generating, exploring, or testing theory [16].

In addressing an impact question using statistical meta-analysis, the approach is predominantly the empirical testing of a theory that the intervention works. The theory being tested may be based on a detailed theory of change (logic model) or be a 'black box' where the mechanisms by which change may be effected are not articulated. The review may, in addition to testing theory, include methods to generate hypotheses about causal relations. Testing often (though not always) seeks to add up or aggregate data from large representative samples to obtain a more precise estimate of effect. In the context of such reviews, searching aims to identify a representative sample of studies, usually by attempting to include all relevant studies in order to avoid bias from study selection (sometimes called 'exhaustive' searching). Theoretical work in such analyses is undertaken predominantly before and after the review, not during the review, and is concerned with developing the hypothesis and interpreting the findings.

In research examining processes or meanings, the approach is predominantly about developing or exploring theory. This may not require representative samples of studies (as in aggregative reviews) but does require variation to enable new conceptual understandings to be generated. Searching for studies in these reviews adopts a theoretical approach to identify a sufficient and appropriate range of studies, either through a rolling sampling of studies according to a framework that is developed inductively from the emerging literature (akin to theoretical sampling in primary research) [17], or through a sampling framework based on an existing body of literature (akin to purposive sampling in primary research) [18]. In both primary research and reviews, theoretical work is undertaken during the process of the research; and, just as with the theory testing reviews, the nature of the concepts may be relatively simple or very complex.

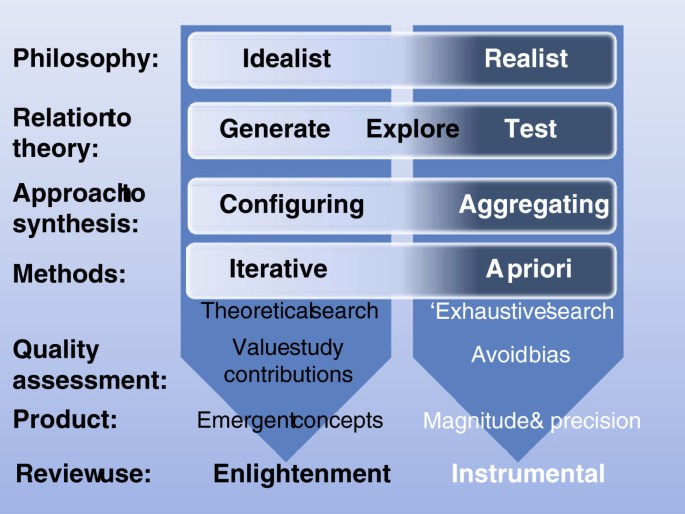

Aggregative and configurative reviews

The distinction between research that tests and research that generates theory also equates to the distinction between review types made by Voils, Sandelowski and colleagues [19, 20] (although we have been very influenced by these authors, the detail of our use of these terms may differ in places). Reviews that are collecting empirical data to describe and test predefined concepts can be thought of as using an 'aggregative' logic. The primary research and reviews are adding up (aggregating) and averaging empirical observations to make empirical statements (within predefined conceptual positions). In contrast, reviews that are trying to interpret and understand the world are interpreting and arranging (configuring) information and are developing concepts (Figure 1). This heuristic also maps onto the way that the review is intended to inform knowledge. Aggregative research tends to be about seeking evidence to inform decisions whilst configuring research is seeking concepts to provide enlightenment through new ways of understanding.

Figure 1: Continua of approaches in aggregative and configurative reviews.

Aggregative reviews are often concerned with using predefined concepts and then testing these using predefined (a priori) methods. Configuring reviews can be more exploratory and, although the basic methodology is determined (or at least assumed) in advance, specific methods are sometimes adapted and selected (iteratively) as the research proceeds. Aggregative reviews are likely to be combining similar forms of data and so be interested in the homogeneity of studies. Configurative reviews are more likely to be interested in identifying patterns provided by heterogeneity [12].

The logic of aggregation relies on identifying studies that support one another and so give the reviewer greater certainty about the magnitude and variance of the phenomenon under investigation. As already discussed in the previous section, the approach to searching for studies to include (the search strategy) is attempting to be exhaustive or, if not exhaustive, then at least avoiding bias in the way that studies are found. Configuring reviews have the different purpose of aiming to find sufficient cases to explore patterns and so are not necessarily attempting to be exhaustive in their searching. (Most reviews contain elements of both aggregation and configuration and so some may require an unbiased set of studies as well as sufficient heterogeneity to permit the exploration of differences between them.)
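As an illustration of how aggregation can increase certainty about magnitude and variance, the following is a minimal sketch of fixed-effect, inverse-variance pooling, one common form of statistical meta-analysis. It is not a method prescribed by this paper; the function name `pool_fixed_effect` and the effect sizes and variances are hypothetical values chosen only for illustration.

```python
def pool_fixed_effect(effects, variances):
    """Pool study effect estimates with inverse-variance weights.

    Returns (pooled_effect, pooled_variance). Assumes a fixed-effect
    model, i.e. that all studies estimate the same underlying effect.
    """
    if not effects or len(effects) != len(variances):
        raise ValueError("effects and variances must be non-empty and equal in length")
    if any(v <= 0 for v in variances):
        raise ValueError("variances must be positive")
    # More precise studies (smaller variance) receive larger weights.
    weights = [1.0 / v for v in variances]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    # The pooled variance is the reciprocal of the summed weights.
    return pooled, 1.0 / total_weight


# Hypothetical effect sizes and variances from three similar studies.
effects = [0.30, 0.10, 0.25]
variances = [0.04, 0.02, 0.05]

pooled, pooled_var = pool_fixed_effect(effects, variances)
print(f"pooled effect = {pooled:.3f}, pooled variance = {pooled_var:.4f}")
```

Under these assumptions the pooled variance is always smaller than the variance of any single included study, which is the sense in which homogeneous studies that 'support one another' give a more precise estimate.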

Aggregating and configuring reviews also vary in their approach to quality assurance. All reviews aim to avoid drawing misleading conclusions because of problems in the studies they contain. Aggregative reviews are concerned with a priori methods and their quality assurance processes assess compliance with those methods. As the basis of quality assurance is known a priori, many aspects of this can be incorporated into the inclusion criteria of the review and then can be further checked at a later quality assurance stage. The inclusion criteria may, for example, require only certain types of study with specific methodological features. There is less consensus in the practice of quality assessment in configurative reviews; some adopt a similar strategy to those employed in aggregative reviews, whereas others reject the idea that the quality of a study can be assessed through an examination of its method, and instead prioritize other issues, such as relevance to the review and the contribution the study can make in the review synthesis to testing or generating theory [21–23]. Some of the differences between aggregating and configuring reviews are shown in Figure 1.

Although the logics of aggregating and configuring research findings demand different methods for reviewing, a review often includes components of both. A meta-analysis may contain a post hoc interpretation of statistical associations which may be configured to generate hypotheses for future testing. A configurative synthesis may include some components where data are aggregated (for example, framework synthesis) [24, 25]. Examples of reviews that are predominantly aggregative, configurative, or with high degrees of both aggregation and configuring are given in Table 1 (and for a slightly different take on this heuristic see Sandelowski et al. [20]).

Similarly, the nature of a review question, the assumptions underlying the question (or conceptual framework), and whether the review aggregates or configures the results of other studies may strongly suggest which methods of review are appropriate, but this is not always the case. Several methods of review are applicable to a broad range of review approaches. Both thematic synthesis [26] and framework synthesis [24, 25], which identify themes within narrative data, can, for example, be used with both aggregative and configurative approaches to synthesis.

Reviews that are predominantly aggregative may have similar epistemological and methodological assumptions to much quantitative research and there may be similar assumptions between predominantly configurative reviews and qualitative research. However, the quantitative/qualitative distinction is not precise and does not reflect the differences in the aggregative and configurative research processes; quantitative reviews may use configurative processes and qualitative reviews can use aggregative processes. Some authors also use the term 'conceptual synthesis' for reviews that are predominantly configurative, but the process of configuring in a review does not have to be limited to concepts; it can also be the arrangement of numbers (as in subgroup analyses of statistical meta-analysis). The term 'interpretative synthesis' is also used to describe reviews where meanings are interpreted from the included studies. However, aggregative reviews also include interpretation, before inspection of the studies to develop criteria for including studies, and after synthesis of the findings to develop implications for policy, practice, and further research. Thus, the aggregate/configure framework cannot be thought of as another way of expressing the qualitative/quantitative 'divide'; it has a more specific meaning concerning the logic of synthesis, and many reviews have elements of both aggregation and configuration.

Further ideological and theoretical assumptions

In addition to the above is a range of issues about whose questions are being asked and the implicit ideological and theoretical assumptions driving both them and the review itself. These assumptions determine the specific choices made in operationalizing the review question and thus determine the manner in which the review is undertaken, including the research studies included and how they are analyzed. Ensuring that these assumptions are transparent is therefore important both for the execution of the review and for accountability. Reviews may be undertaken to inform decision-making by non-academic users of research such as policymakers, practitioners, and other members of the public, and so there may be a wide range of different perspectives that can inform a review [27, 28]. The perspectives driving the review will also influence the findings of the review and thereby clarify what is known and not known (within those perspectives) and thus inform what further primary research is required. Both reviewer and user perspectives can thus have an ongoing influence in developing user-led research agendas. There may be many different agendas and thus a plurality of both primary research and reviews of research on any given issue.

A further key issue that is related to the types of questions being asked and the ideological and theoretical assumptions underlying them is the ontological and epistemological position taken by the reviewers. Aggregative reviews tend to assume that there is (often within disciplinary specifications/boundaries) a reality about which empirical statements (generalizations) can be made, even if this reality is socially constructed; in other words they take a 'realist' philosophical position (a broader concept than the specific method of 'realist synthesis'). Some configurative reviews may not require such realist assumptions. They take a more relativist idealist position; the interest is not in seeking a single 'correct' answer but in examining the variation and complexity of different conceptualizations [12, 29]. These philosophical differences can be important in understanding the approach taken by different reviewers, just as they are in understanding variation in approach (and debates about research methods) in primary research. These differences also relate to how reviews are used. Aggregative reviews are often used to make empirical statements (within agreed conceptual perspectives) to inform decision making instrumentally whilst configuring reviews are often used to develop concepts and enlightenment [30].

Structure and components of reviews

As well as varying in their questions, aims, and philosophical approach, reviews also vary in their structure. They can be single reviews that synthesize a specific literature to answer the review question. They may be maps of what research has been undertaken that are products in their own right and also a stage on the way to one or more syntheses. Reviews can also contain multiple components equating to conducting many reviews or to reviewing many reviews.

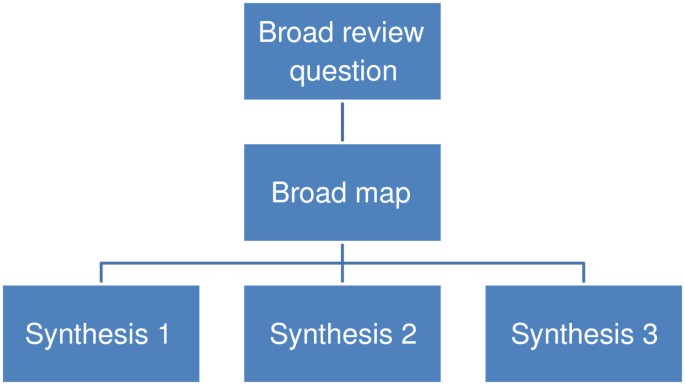

Systematic maps

To some degree, most reviews describe the studies they contain and thus provide a map or account of the research field. Some reviews go further than this and more explicitly identify aspects of the studies that help describe the research field in some detail; the focus and extent of such description vary with the aims of the map. Maps are useful products in their own right but can also be used to inform the process of synthesis and the interpretation of the synthesis [3, 30]. Instead of automatically undertaking a synthesis of all included studies, an analysis of the map may lead to a decision to synthesize only a subset of studies, or to conduct several syntheses in different areas of the one map. A broader initial review question and a narrower subsequent review question allow the synthesis of a narrower subset of studies to be understood within the wider literature described in terms of research topics, primary research methods, or both. It also allows broader review questions to create a map for a series of reviews (Figure 2) or mixed methods reviews (Figure 3). In sum, maps have three main purposes: (i) to describe the nature of a research field; (ii) to inform the conduct of a synthesis; and (iii) to interpret the findings of a synthesis [3, 31]. The term 'scoping review' is also sometimes used in a number of different ways to describe (often non-systematic) maps and/or syntheses that quickly examine the nature of the literature on a topic area [32, 33], sometimes as part of the planning for a systematic review.

Figure 2: A map leading to several syntheses.

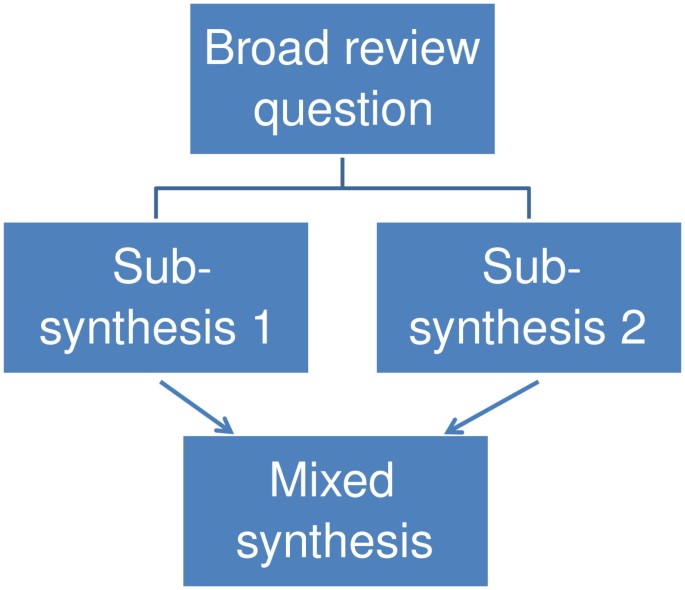

Figure 3: A mixed method review with three syntheses.

Mixed methods reviews

The inclusion criteria of a review may allow all types of primary research or only studies with specific methods that are considered most appropriate to address the review question. Including several different methods of primary research in a review can create challenges in the synthesis stage. For example, a review asking about the impact of some life experience may examine both randomized controlled trials and large data sets on naturally occurring phenomena (such as in large scale cohort studies). Another strategy is to have sub-reviews that ask questions about different aspects of an issue and which are likely to consider different primary research [34, 35]. For example, a statistical meta-analysis of impact studies may be compared with a conceptual synthesis of people's views of the issue being evaluated [34, 35]. The two sub-reviews can then be combined and contrasted in a third synthesis as in Figure 3. Mixed methods reviews have many similarities with mixed methods in primary research and there are therefore numerous ways in which the products of different synthesis methods may be combined [35].

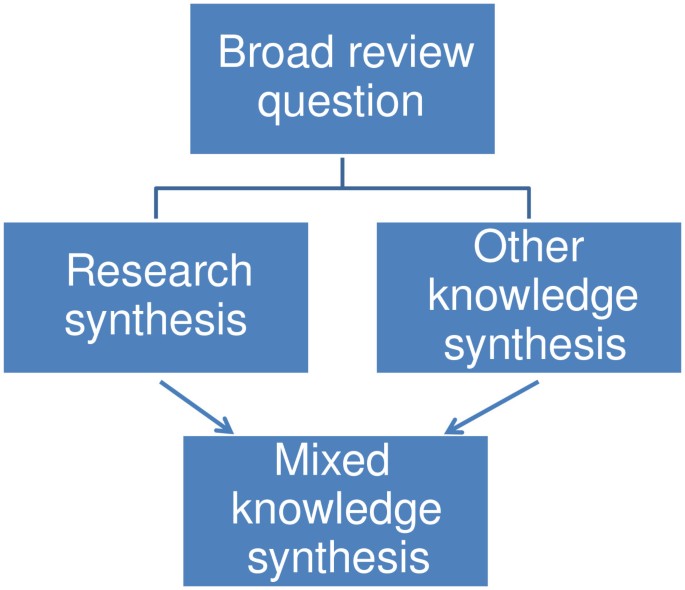

Mixed knowledge reviews use a similar approach but combine data from previous research with other forms of data; for example, a survey of practice knowledge about an issue (Figure 4).

Figure 4: Mixed knowledge review.

Another example of a mixed methods review is realist synthesis [9], which examines the usefulness of mid-level policy interventions across different areas of social policy by unpacking the implicit models of change, followed by an iterative process of identifying and analyzing the evidence in support of each part of that model. This is quite similar to a theory-driven aggregative review (or series of reviews) that aggregatively tests different parts of a causal model. The first part of the process is a form of configuration in clarifying the nature of the theory and what needs to be empirically tested; the second part is the aggregative testing of those subcomponents of the theory. The difference between this method and more 'standard' systematic review methods is that the search for empirical evidence is more of an iterative, investigative process of tracking down and interpreting evidence. Realist synthesis will also consider a broad range of empirical evidence and will assess its value in terms of its contribution rather than according to some preset criteria. The approach therefore differs from the predominantly a priori strategy used in either standard 'black box' or theory-driven aggregative reviews. There have also been attempts to combine aggregative 'what works' reviews with realist reviews [36]. These innovations are exploring how best to develop the breadth, generalizability, and policy relevance of aggregative reviews without losing their methodological protection against bias.

There are also reviews that use other pre-existing reviews as their source of data. These reviews of reviews may draw on the data of previous reviews either by using the findings of previous reviews or by drilling down to using data from the primary studies in the reviews [37]. Information drawn from many reviews can also be mined to understand more about a research field or research methods in meta-epidemiology [38]. As reviews of reviews and meta-epidemiology both use reviews as their data, they are sometimes both described as types of 'meta reviews'. This terminology may not be helpful as it links together two approaches to reviews which have little in common apart from the shared type of data source. A further term is 'meta evaluation'. This can refer to the formative or summative evaluation of primary evaluation studies or can be a summative statement of the findings of evaluations, which is a form of aggregative review (see Gough et al. in preparation, and [39]).

Breadth, depth, and 'work done' by reviews

Primary research studies and reviews may be read as isolated products, yet they are usually one step in larger or longer-term research enterprises. A research study usually addresses a macro research issue and a specific focused sub-issue that is addressed by its specific data and analysis [16]. This specific focus can be broad or narrow in scope and deep or not so deep in the detail in which it is examined.

Breadth of question

Many single component aggregative reviews aim for homogeneity in the focus and method of included studies. They select narrowly defined review questions to ensure a narrow methodological focus of research findings. Although well justified, these decisions may lead to each review providing a very narrow view of both research and the issue that is being addressed. A user of such reviews may need to take account of multiple narrow reviews in order to help them determine the most appropriate course of action.

The need for a broader view is raised by complex questions. One example is assessing the impact of complex interventions. There are often many variants of an intervention, but even within one particular highly specified intervention there may be variations in terms of the frequency, duration, degree, engagement, and fidelity of delivery [40]. All of this variation may result in different effects on different participants in different contexts. The variation may also impact differentially within the hypothesized program theory of how the intervention impacts on different causal pathways. Reviews therefore need a strategy for how they can engage with this complexity. One strategy is to achieve breadth through multi-component reviews; for example, a broad map which can provide the context for interpreting a narrower synthesis, a series of related reviews, or mixed methods reviews. Other strategies include 'mega reviews', where the results from very many primary studies or meta-analyses are aggregated statistically (for example, [41, 42]), and multivariate analyses, where moderator variables are used to identify the 'active ingredients' of interventions (for example, [43, 44]). Whether breadth is achieved within a single review, from a sequence of reviews, from reviews of reviews, or from relating to the primary and review work of others, the cycle of primary research production and synthesis is part of a wider circle of engagement and response to users of research [45].

Review resources and breadth and depth of review

The resources required for a systematic review are not fixed. With different amounts of resource one can achieve different types of review. Broad reviews such as mixed methods and other multi-component reviews are likely to require more resources, all else being constant, than narrow single method reviews. Thus, in addition to the breadth of a review there is the issue of its depth, or the detail with which it is undertaken. A broad review may not have greater resources than a narrow review, in which case those resources are spread more thinly and each aspect of that breadth may be undertaken with less depth.

When time and other resources are very restricted, a rapid review may be undertaken in which some aspect of the review will be limited; for example, breadth of review question, sources searched, data coded, quality and relevance assurance measures, and depth of analysis [46, 47]. Many students, for example, undertake literature reviews that may be informed by systematic review principles of rigor and transparency of reporting; some of these may be relatively small exercises whilst others make up a substantial component of the thesis. If rigor of execution and reporting are reduced too far, it may be more appropriate to characterize the work as non-systematic scoping than as a systematic review.

Reviews thus vary in the extent that they engage with a research issue. The enterprise may range in size from, for example, a specific program theory to a whole field of research. The enterprise may be under study by one research team, by a broader group such as a review group in an international collaboration, or be the focus of study by many researchers internationally. The enterprises may be led by academic disciplines, by applied review collaborations, by priority setting agendas, and by forums to enable different perspectives to be engaged in research agendas. Whatever the nature of the strategic content or process of these macro research issues, reviews vary in the extent that they plan to contribute to such more macro questions. Reviews thus vary in the extent that this research work is done within a review, rather than before and after a review (by primary studies or by other reviews).

Reviews can be undertaken with different levels of skill, efficiency, and automated tools [48] and so resources do not equate exactly with the 'work done' in progressing a research issue. In general, a broad review with relatively little depth (providing a systematic overview) may be comparable in work done to a detailed narrow review (as in many current statistical meta-analyses). A multi-component review addressing complex questions using both aggregative and configuring methods may be attempting to achieve more work, though there may be challenges in terms of maintaining consistency or transparency of detail in each component of the review. In contrast, a rapid review has few resources and so is attempting less than other reviews, but there may be dangers that the limited scope (and limited contribution to the broader research agenda) is not understood by funders and users of the review. How best to use available resources is a strategic issue depending upon the nature of the review question, the state of the research available on that issue, and the knowledge about that state of the research. It is an issue of being fit for purpose. A review doing comparatively little 'work' may be exactly what is needed in one situation but not in another.

Conclusion

Explicit accountable methods are required for primary research and reviews of research. This logic applies to all research questions and thus multiple methods for reviews of research are required, just as they are required for primary research. These differences in types of reviews reflect the richness of primary research not only in the range of variation but also in the philosophical and methodological challenges that they pose, including the mixing of different types of methods. The dominance of one form of review question and review method and the branding of some other forms of review does not clearly describe the variation in review designs and methods and the similarities and differences between these methods. Clarity about the dimensions along which reviews vary provides a way to develop review methods further and to make critical judgments necessary for the commissioning, production, evaluation, and use of reviews. This paper has argued for the need for clarity in describing the design and methods of systematic reviews along many dimensions; and that particularly useful dimensions for planning, describing, and evaluating reviews are:

- 1.

Review aims and approach: (1) approach of the review: ontological, epistemological, theoretical, and ideological assumptions of the reviewers and users of the review including any theoretical mode; (2) review question: the type of answer that is being sought (and the type of information that would answer it); and (3) aggregation and configuration: the relative use of these logics and strategies in the different review components (and the positioning of theory in the review process, the degree of homogeneity of data, and the iteration of review method).

- 2.

Structure and components of reviews: (4) the systematic map and synthesis components of the review; and (5) the relation between these components.

- 3.

Breadth, depth, and 'work done' by reviews: (6) macro research strategy: the positioning of the review (and resources and the work aimed to be done) within the state of what is already known and other research planned by the review team and others; and (7) the resources used to achieve this.

Clarifying some of the main dimensions along which reviews vary can provide a framework within which description of more detailed aspects of methodology can occur; for example, the specific strategies used for searching, identifying, coding, and synthesizing evidence and the use of specific methods and techniques ranging from review management software to text mining to statistical and narrative methods of analysis. Such clearer descriptions may lead in time to a more overarching system of terminology for systematic reviews.

Authors' information

DG, JT, and SO are all directors of the Evidence for Policy and Practice Information and Coordinating Centre (EPPI-Centre) [49].

References

- 1.

Cooper H, Hedges L: The Handbook of Research Synthesis. 1994, Russell Sage Foundation, New York

- 2.

Gough D: Dimensions of difference in evidence reviews (Overview; I. Questions, evidence and methods; II. Breadth and depth; III. Methodological approaches; IV. Quality and relevance appraisal; V. Communication, interpretation and application). Series of six posters presented at National Centre for Research Methods meeting, Manchester. January 2007, EPPI-Centre, London, http://eppi.ioe.ac.uk/cms/Default.aspx?tabid=1919

- 3.

Gough D, Thomas J: Commonality and diversity in reviews. Introduction to Systematic Reviews. Edited by: Gough D, Oliver S, Thomas J. 2012, Sage, London, 35-65.

- 4.

Chalmers I, Hedges L, Cooper H: A brief history of research synthesis. Eval Health Professions. 2002, 25: 12-37. 10.1177/0163278702025001003.

- 5.

Bohlin I: Formalising syntheses of medical knowledge: the rise of meta-analysis and systematic reviews. Perspect Sci. in press

- 6.

Noblit G, Hare RD: Meta-ethnography: synthesizing qualitative studies. 1988, Sage Publications, Newbury Park NY

- 7.

Noyes J, Popay J, Pearson A, Hannes K, Booth A: Qualitative research and Cochrane reviews. Cochrane Handbook for Systematic Reviews of Interventions. Edited by: Higgins JPT, Green S. Version 5.1.0 (updated March 2011). The Cochrane Collaboration. www.cochrane-handbook.org

- 8.

Dixon-Woods M, Cavers D, Agarwal S, Annandale E, Arthur A, Harvey J, Hsu R, Katbamna S, Olsen R, Smith L, Riley R, Sutton AJ: Conducting a critical interpretive synthesis of the literature on access to healthcare by vulnerable groups. BMC Med Res Methodol. 2006, 6: 35-10.1186/1471-2288-6-35.

- 9.

Pawson R: Evidence-based policy: a realist perspective. 2006, Sage, London

- 10.

Shepperd S, Lewin S, Straus S, Clarke M, Eccles M, Fitzpatrick R, Wong G, Sheikh A: Can we systematically review studies that evaluate complex interventions?. PLoS Med. 2009, 6: 8-10.1371/journal.pmed.1000008.

- 11.

Greenhalgh T, Robert G, Macfarlane F, Bate P, Kyriakidou O, Peacock R: Storylines of research in diffusion of innovation: a meta-narrative approach to systematic review. Soc Sci Med. 2005, 61: 417-430. 10.1016/j.socscimed.2004.12.001.

- 12.

Barnett-Page E, Thomas J: Methods for the synthesis of qualitative research: a critical review. BMC Med Res Methodol. 2009, 9: 59-10.1186/1471-2288-9-59.

- 13.

Moher D, Liberati A, Tetzlaff J, Altman DG, The PRISMA Group: Preferred reporting items for systematic reviews and meta-analyses: the PRISMA Statement. PLoS Med. 2009, 6: 6-10.1371/journal.pmed.1000006.

- 14.

PLoS Medicine Editors: Best practice in systematic reviews: The importance of protocols and registration. PLoS Med. 2011, 8: 2-

- 15.

Thomas G: Introduction: evidence and practice. Evidence-based Practice in Education. Edited by: Pring R, Thomas G. 2004, Open University Press, Buckingham, 44-62.

- 16.

Gough D, Oliver S, Newman M, Bird K: Transparency in planning, warranting and interpreting research. Teaching and Learning Research Briefing 78. 2009, Teaching and Learning Research Programme, London

- 17.

Strauss A, Corbin J: Basics of qualitative research, grounded theory procedures and techniques. 1990, Sage, London

- 18.

Miles M, Huberman A: Qualitative Data Analysis. 1994, Sage, London

- 19.

Voils CI, Sandelowski M, Barroso J, Hasselblad V: Making sense of qualitative and quantitative findings in mixed research synthesis studies. Field Methods. 2008, 20: 3-25. 10.1177/1525822X07307463.

- 20.

Sandelowski M, Voils CJ, Leeman J, Crandlee JL: Mapping the Mixed Methods-Mixed Research Synthesis Terrain. Journal of Mixed Methods Research. 2011, 10.1177/1558689811427913.

- 21.

Pawson R, Boaz A, Grayson L, Long A, Barnes C: Types and Quality of Knowledge in Social Care. 2003, Social Care Institute for Excellence, London

- 22.

Oancea A, Furlong J: Expressions of excellence and the assessment of applied and practice-based research. Res Pap Educ. 2007, 22: 119-137. 10.1080/02671520701296056.

- 23.

Harden A, Gough D: Quality and relevance appraisal. Introduction to Systematic Reviews. Edited by: Gough D, Oliver S, Thomas J. 2012, Sage, London, 153-178.

- 24.

Thomas J, Harden A: Methods for the thematic synthesis of qualitative research in systematic reviews. BMC Med Res Methodol. 2008, 8: 45-10.1186/1471-2288-8-45.

- 25.

Oliver S, Rees RW, Clarke-Jones L, Milne R, Oakley AR, Gabbay J, Stein K, Buchanan P, Gyte G: A multidimensional conceptual framework for analyzing public involvement in health services research. Health Expect. 2008, 11: 72-84. 10.1111/j.1369-7625.2007.00476.x.

- 26.

Carroll C, Booth A, Cooper K: A worked example of "best fit" framework synthesis: a systematic review of views concerning the taking of some potential chemopreventive agents. BMC Med Res Methodol. 2011, 11: 29-10.1186/1471-2288-11-29.

- 27.

Rees R, Oliver S: Stakeholder perspectives and participation in reviews. Introduction to Systematic Reviews. Edited by: Gough D, Oliver S, Thomas J. 2012, Sage, London, 17-34.

- 28.

Oliver S, Dickson K, Newman M: Getting started with a review. Introduction to Systematic Reviews. Edited by: Gough D, Oliver S, Thomas J. 2012, Sage, London, 66-82.

- 29.

Spencer L, Ritchie J, Lewis J, Dillon L: Quality in Qualitative Evaluation: a Framework for Assessing Research Evidence. 2003, Government Chief Social Researcher's Office, London

- 30.

Weiss C: The many meanings of research utilisation. Public Adm Rev. 1979, 29: 426-431.

- 31.

Peersman G: A Descriptive Mapping of Health Promotion Studies in Young People EPPI Research Report. 1996, EPI-Centre, London

- 32.

Arksey H, O'Malley L: Scoping Studies: towards a methodological framework. Int J Soc Res Methodol. 2005, 8: 19-32. 10.1080/1364557032000119616.

- 33.

Levac D, Colquhoun H, O'Brien KK: Scoping studies: advancing the methodology. Implement Sci. 2010, 5: 69-10.1186/1748-5908-5-69.

- 34.

Thomas J, Harden A, Oakley A, Oliver S, Sutcliffe K, Rees R, Brunton G, Kavanagh J: Integrating qualitative research with trials in systematic reviews: an example from public health. Brit Med J. 2004, 328: 1010-1012. 10.1136/bmj.328.7446.1010.

- 35.

Harden A, Thomas J: Mixed methods and systematic reviews: examples and emerging issues. Handbook of Mixed Methods in the Social and Behavioral Sciences. Edited by: Tashakkori A, Teddlie C. 2010, Sage, London, 749-774. 2

- 36.

van der Knaap LM, Leeuw FL, Bogaerts S, Nijssen LTJ: Combining Campbell standards and the realist evaluation approach: the best of two worlds?. Am J Eval. 2008, 29: 48-57. 10.1177/1098214007313024.

- 37.

Smith V, Devane D, Begley CM, Clarke M: Methodology in conducting a systematic review of systematic reviews of healthcare interventions. BMC Med Res Methodol. 2011, 11: 15-10.1186/1471-2288-11-15.

- 38.

Oliver S, Bagnall AM, Thomas J, Shepherd J, Sowden A, White I, Dinnes J, Rees R, Colquitt J, Oliver K, Garrett Z: RCTs for policy interventions: a review of reviews and meta-regression. Health Technol Assess. 2010, 14: 16-

- 39.

Scriven M: An introduction to meta-evaluation. Educational Products Report. 1969, 2: 36-38.

- 40.

Carroll C, Patterson M, Wood S, Booth A, Rick J, Balain S: A conceptual framework for implementation fidelity. Implement Sci. 2007, 2: 40-10.1186/1748-5908-2-40.

- 41.

Smith ML, Glass GV: Meta-analysis of psychotherapy outcome studies. Am Psychol. 1977, 32: 752-760.

- 42.

Hattie J: Visible Learning: A Synthesis of Over 800 Meta-Analyses Relating to Achievement. 2008, Routledge, London

- 43.

Cook TD, Cooper H, Cordray DS, Hartmann H, Hedges LV, Light RJ, Louis TA, Mosteller F: Meta-analysis for Explanation: A Casebook. 1992, Russell Sage Foundation, New York

- 44.

Thompson SG, Sharp SJ: Explaining heterogeneity in meta-analysis: a comparison of methods. Stat Med. 1999, 18: 2693-2708. 10.1002/(SICI)1097-0258(19991030)18:20<2693::AID-SIM235>3.0.CO;2-V.

- 45.

Stewart R, Oliver S: Making a difference with systematic reviews. Introduction to Systematic Reviews. Edited by: Gough D, Oliver S, Thomas J. 2012, Sage, London, 227-244.

- 46.

Government Social Research Unit: Rapid Evidence Assessment Toolkit. 2008, http://www.civilservice.gov.uk/networks/gsr/resources-and-guidance/rapid-evidence-assessment

- 47.

Abrami PC, Borokhovski E, Bernard RM, Wade CA, Tamim R, Persson T, Surkes MA: Issues in conducting and disseminating brief reviews of evidence. Evidence & Policy: A Journal of Research, Debate and Practice. 2010, 6: 371-389. 10.1332/174426410X524866.

- 48.

Brunton J, Thomas J: Information management in reviews. Introduction to Systematic Reviews. Edited by: Gough D, Oliver S, Thomas J. 2012, Sage, London, 83-106.

- 49.

Evidence for Policy and Practice Information and Coordinating Centre (EPPI-Centre): http://eppi.ioe.ac.uk

Acknowledgements

The authors wish to acknowledge the support and intellectual contribution of their previous and current colleagues at the EPPI-Centre. They also wish to acknowledge the support of their major funders, as many of the ideas in this paper were developed whilst working on research supported by their grants; this includes the Economic and Social Research Council, the Department of Health, and the Department for Education. The views expressed here are those of the authors and are not necessarily those of our funders.

Additional information

Competing interests

The authors declare that they have no competing interests.

Authors' contributions

All three authors have made substantial contributions to the conception of the ideas in this paper, have been involved in drafting or revising it critically for important intellectual content, and have given final approval of the version to be published.

Rights and permissions

This article is published under license to BioMed Central Ltd. This is an Open Access article distributed under the terms of the Creative Commons Attribution License (http://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Gough, D., Thomas, J. & Oliver, S. Clarifying differences between review designs and methods. Syst Rev 1, 28 (2012). https://doi.org/10.1186/2046-4053-1-28

Keywords

- Aggregation configuration

- Complex reviews

- Mapping

- Methodology

- Mixed methods reviews

- Research methods

- Scoping reviews

- Synthesis

- Systematic reviews

- Taxonomy of reviews

Source: https://systematicreviewsjournal.biomedcentral.com/articles/10.1186/2046-4053-1-28
